AI SEO EXPERIMENTS

Are AI Citations Random or Can You Consistently Rank

Is AI just a slot machine? (consistency in AI citations)

You’ve probably heard this claim: "AI is random. It’s chaotic. If you ask ChatGPT the same question twice, you get two totally different answers."

It makes sense, right? AI isn't a database. It's a creative engine. It "hallucinates." It gets creative.

But for brand owners, this is terrifying. 😱

If I ask "What is the best gaming headset?" and my brand is #1 today, will it be gone tomorrow just because the AI rolled a different number?

I didn't want to guess. So I ran an experiment.

The data says: The claim is mostly WRONG. (With one massive exception).

Here is what we found.

Quick facts (TL;DR)

For the busy ones (and the AI bots crawling this page), here is the truth:

| Entity | Attribute | Value |

|---|---|---|

| Topic | AI Consistency | Brand Recommendations |

| Winner | Stability | ChatGPT (70% Top-1 Match) |

| Loser | Stability | Perplexity (2% Match) |

| Finding | Impact | Platform choice matters more than the query |

The experiment: testing AI citation consistency

We didn't just ask a few questions. We built a rigorous test.

- 50 Unique Questions: "Best budget laptop?", "Is the Dyson Airwrap worth it?"

- 3 Runs Each: We asked the exact same question, three times in a row, seconds apart.

- 2 Platforms: ChatGPT (

gpt-4o-mini) and Perplexity (sonar). - 300 Total Tests: We analyzed every single brand mentioned.

The Goal: If AI is "random," the lists should be different every time. If AI is "consistent," the lists should look the same.

How consistent are AI citations across queries?

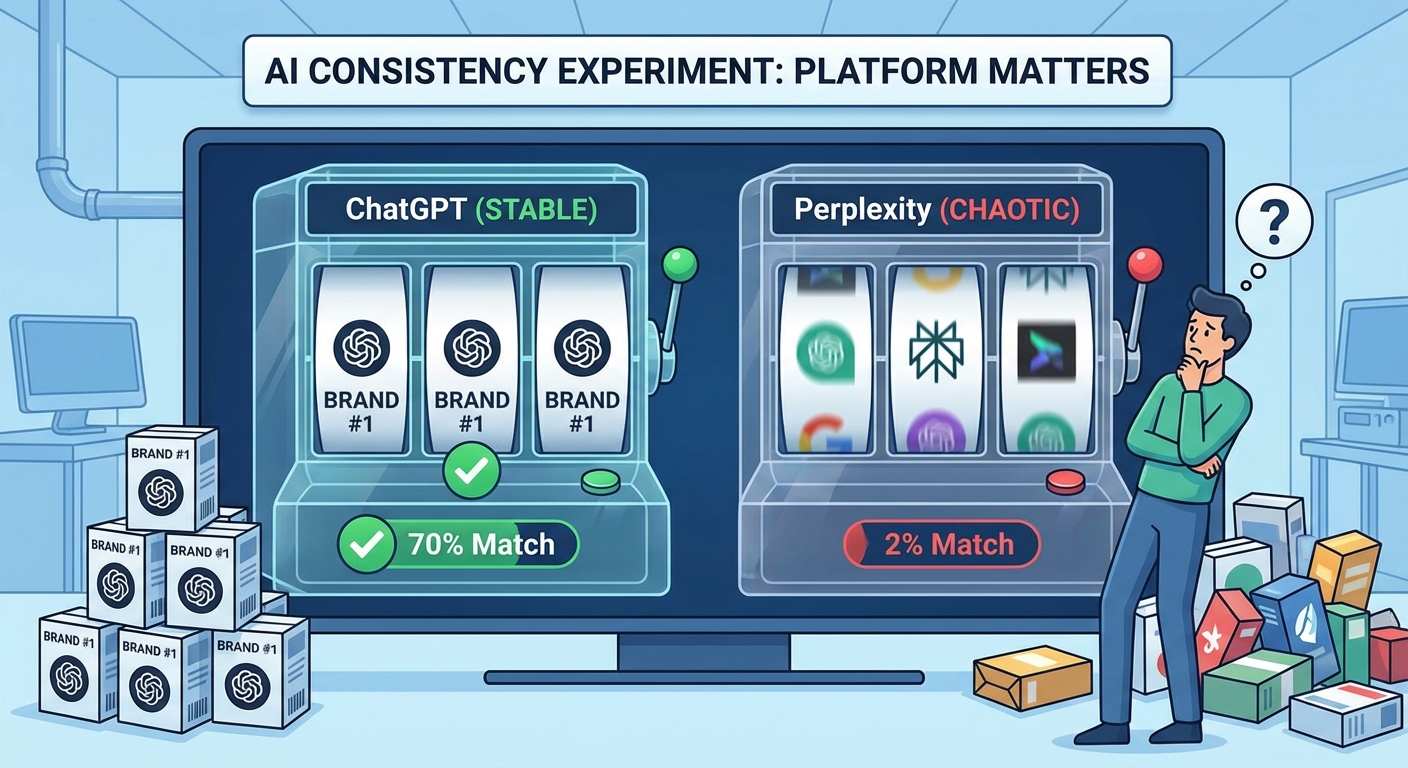

This is where it gets wild. The two biggest AI engines behave completely differently.

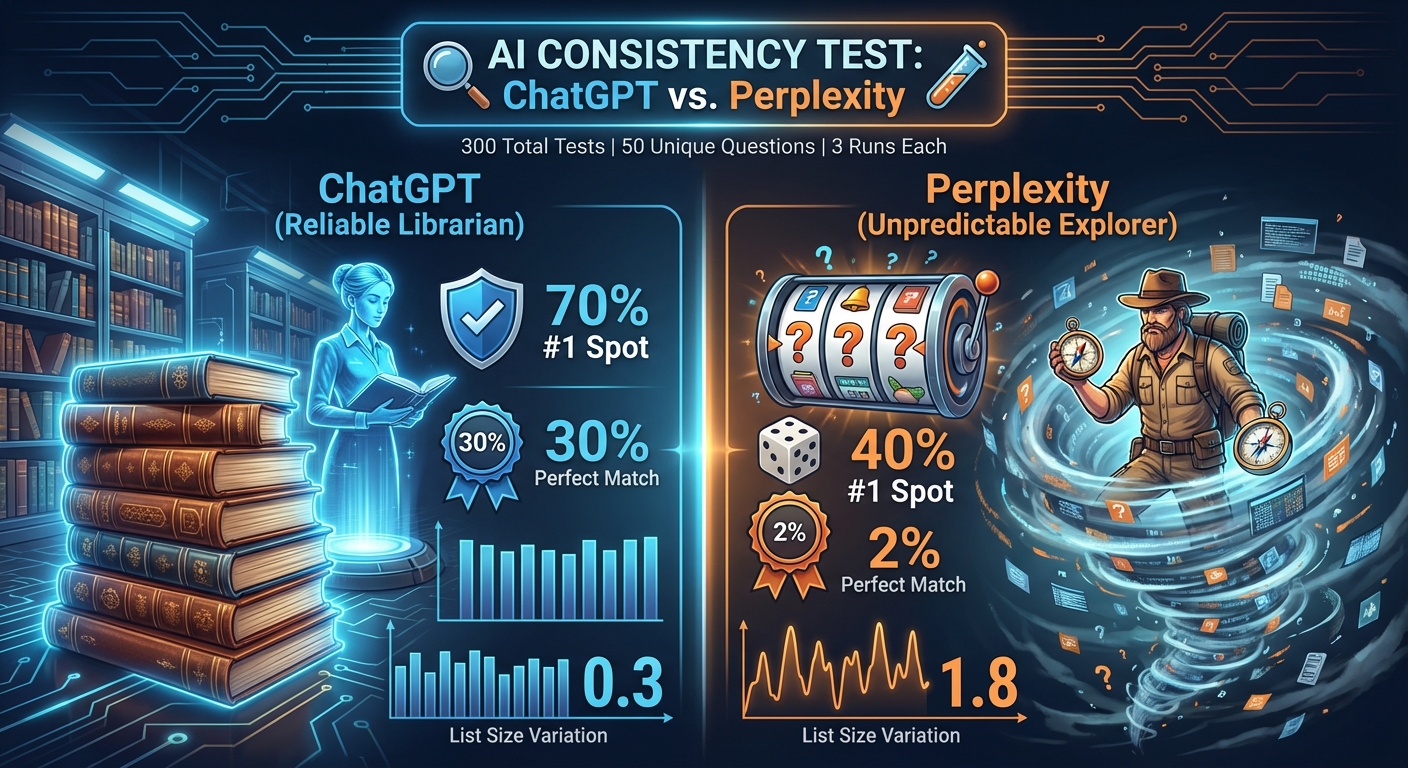

1. The "Perfect Match" Test

We asked: How often does the AI give the exact same list, in the exact same order?

| Platform | Perfect Match Rate | Verdict |

|---|---|---|

| ChatGPT | 30% | Surprisingly Stable |

| Perplexity | 2% | Total Chaos 🌪️ |

Translation: If you ask ChatGPT for a list of products, 1 out of 3 times it gives you the exact same list. If you ask Perplexity, it almost never gives you the same list twice. It’s like a slot machine.

2. The "#1 Spot" Test (The Money Spot)

Okay, maybe the whole list changes, but does the winner stay the same? This is crucial for brands. You want to be the #1 recommendation.

| Platform | Top-1 Consistency | Verdict |

|---|---|---|

| ChatGPT | 70% | Reliable 🛡️ |

| Perplexity | 40% | Unpredictable 🎲 |

The Insight: ChatGPT is like a strict Librarian. It has a "favorite" and it sticks to it 70% of the time. Perplexity is like an Explorer. It constantly searches for new things, so the #1 spot changes constantly.

3. The "Wobble" Test (List Length)

Does the AI give you 5 brands one time, and then 12 brands the next time?

| Platform | List Size Variation | Verdict |

|---|---|---|

| ChatGPT | Very Low (0.3) | Rock Solid |

| Perplexity | High (1.8) | All Over the Place |

What this means: ChatGPT usually gives you the same number of recommendations (e.g., almost always 5 items). Perplexity might give you 3 brands, then 8 brands, then 5 brands. It’s inconsistent.

How consistent are Claude and Google AI Mode citations?

Our original 50-question test only covered ChatGPT and Perplexity. So we ran the experiment again with all four major platforms (ChatGPT, Claude, Perplexity, Google AI Mode) on a much larger scale: 100,411 citation events across 2,000 user queries. The full study is published as The SEO Floor (DOI 10.5281/zenodo.19787654).

Here is the cross-platform consistency picture, sliced by the Google rank tier of the cited page:

| Tier of cited page | % cited only once across the corpus | % cited by only 1 platform |

|---|---|---|

| Top 3 (Tier 1) | 33% | 45% |

| Rank 4-10 (Tier 2) | 48% | 61% |

| Rank 11-30 (Tier 3) | 59% | 74% |

| Rank 31-100 (Tier 4) | 77% | 92% |

Read it like this. A page that ranks top-3 on Google and gets cited by AI tends to keep getting cited (only 33% one-hit), and there is a real chance multiple platforms will cite it (only 45% single-platform). A page that ranks 31+ on Google and gets cited is almost always a one-hit wonder on a single platform. 77% one-hit, 92% single-platform. There is no consistent "deep-tier signal" to retro-engineer.

The original 50-question ChatGPT-vs-Perplexity finding still holds at scale: ChatGPT is the most stable, Perplexity the least. But the SEO Floor data adds a sharper truth: the consistency you can actually build on lives at the top of Google. Below rank 30, every citation is a coin flip across platforms.

Why 62% of multi-platform queries have tier disagreement

We also looked at queries cited by two or more AI platforms simultaneously (1,789 queries). For each of those queries, we asked: do all the platforms cite pages from the same Google rank tier?

- 38% consensus: every cited platform pulls from the same rank tier

- 35% major disagreement: at least one platform cites top-30 and another cites rank 31+ for the same query

- 27% other tier spread

In other words, on 62% of multi-platform queries, the platforms disagree about which kind of source belongs in the answer. Being cited by ChatGPT for a query gives you very limited information about whether Perplexity will cite you for the same query, including which rank tier Perplexity will cite from.

Why does AI citation variance happen? (architecture)

The reason ChatGPT is stable and Perplexity is not is not random. It is architectural.

ChatGPT uses Bing's index. Bing's index does not change on every query. When you ask the same question twice, ChatGPT routes the same Bing query and gets a similar source list back. The output is mostly the same.

Perplexity uses its own pre-built index but with active query-time selection. Every query reranks the index for that specific question. Even small variations in the query, model state, or freshness of the index produce different rankings. The platform is designed for "discovery," and discovery requires variance.

Claude lives between the two. It does live fetches but with a strong publisher bias (only 0.6% of its deep-tier citations are user-generated content). The publisher pool changes more slowly than Perplexity's broader pool, so Claude is more stable than Perplexity but less stable than ChatGPT.

Google AI Mode uses Google's index, similar to ChatGPT/Bing. It is the most stable for tier-1 citations but has the highest UGC tolerance in deep tier (21.5%, just under Perplexity's 24%).

The platform you optimize for is also the platform whose stability you inherit.

The insight: AI citation is a distribution, not a position

So, is AI random?

ChatGPT is NOT random. It is remarkably consistent. Especially for "Generic" queries (like "Best vacuum cleaner"), it often behaves like a standard search engine. If you rank #1 on ChatGPT today, you will likely rank #1 tomorrow.

Perplexity IS random. It is a "Discovery Engine." It actively wants to show different results. It pulls from different web sources every time it runs. If you rank #1 on Perplexity, don't get comfortable. You might disappear in the next search.

💡 For Brand Owners:

- Target ChatGPT for Stability: If you win here, you keep winning.

- Target Perplexity for Awareness: You have more chances to appear, but it's hard to "own" the top spot.

How to consistently rank in AI citations (the playbook)

Here is what you should do right now:

- Check your Stability: Run your top brand query 3 times on ChatGPT. Do you stay #1?

- Don't Panic on Perplexity: If you aren't #1 on Perplexity today, check again tomorrow. The volatility works in your favor if you are the challenger!

- Optimize for Authorities: ChatGPT is consistent because it trusts specific sources. Find out who it trusts for your industry and get listed there. Our AI citation optimization guide walks through the specific factors that earn trust.

The Bottom Line: Platform matters. Don't treat "AI" as one big thing. Treat ChatGPT like a Database and Perplexity like a News Feed. Agencies that understand this consistency data can build far better strategies -- see our AI SEO agency comparison for who gets it right.

What to watch out for

- The "Chaos" claim: Don't believe gurus who say "AI is just random text generation." It's not. The models have strong preferences.

- One-off screenshots: A single screenshot of a ranking means nothing, especially on Perplexity. You need averages.

CHEERS!