AI SEO Experiments

The 1.4% Overlap: Why Everything You Know About AI SEO Is Wrong

Most people doing AI SEO are operating on a massive, expensive assumption. They think, "If I'm the best source, the AI will cite me." They spend thousands on "quality content" and "Domain Authority," expecting ChatGPT or Perplexity to recognize their brilliance.

But the data tells a different story. Across our 100,411-event SEO Floor study and an earlier 60-run consensus experiment, the same pattern shows up: AI platforms looking at the same query do not see the same internet. The domains ChatGPT and Perplexity cite have a Jaccard overlap of just 0.014 (1.4%). Among multi-platform queries (where two or more AI platforms cited at least one source for the same query), 62% show meaningful disagreement about which Google rank tier they cite from. Different platforms pull from different source pools, weight rank differently, and tolerate UGC differently.

This post walks through both experiments. The original small-N "consensus scoring" run gave us the first signal. The 100,411-event scale-up confirmed the pattern and added an architectural explanation: the divergence is not random, it is structural. AI "truth" is not about quality as we knew it. It is about repeatability across architectures.

🧪 THE EXPERIMENT: 60 RUNS, 20 TOPICS, 3 ADVERSARIES

I didn't want "vibes." I wanted hard data. So, I wrote a set of Python scripts 🐍 to track how different AI engines behave when things get controversial.

I picked 20 highly polarized research questions: the kind of topics where there is no easy answer and everyone is fighting for the top spot. We're talking about things like vaccine policy, immigration impacts, and content moderation.

Here was the setup:

• The Engines: ChatGPT (via Bing search), Perplexity (proprietary index), and Google Search (via a custom search engine).

• The Repetition: I ran every query 3 times to measure stability. That’s 60 total runs (20 topics × 3).

• The Goal: I wanted to see if they cited the same domains, how often they relied on government sources, and how much they changed their minds.

I built a custom classification system to label the behaviors I saw. I wasn't just looking for URLs; I was looking for patterns. Was the AI in "Authority Mode" (blindly trusting .gov sites)? Was it in "Consensus Mode" (picking whatever was repeated most)? Or was it just "Volatile" (spinning a new web every time I hit enter)?
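The post doesn't publish the classifier's rules, so here is a minimal Python sketch of how such labels could be assigned. It assumes each topic has already been reduced to three numbers: its institutional (.gov/.edu/.int) citation share, its consensus share, and its run-to-run citation stability. The thresholds and the priority order below are hypothetical placeholders, not the cutoffs used in the experiment.

```python
from dataclasses import dataclass

# Hypothetical cutoffs: the experiment's real thresholds are not published.
AUTHORITY_MIN = 0.30  # institutional (.gov/.edu/.int) share of citations
CONSENSUS_MIN = 0.20  # share of citations whose domain appears in 2+ retrievers
VOLATILE_MAX = 0.10   # mean run-to-run overlap below this counts as volatile


@dataclass
class TopicMetrics:
    institutional_share: float
    consensus_share: float
    stability: float


def classify(m: TopicMetrics) -> str:
    """Return one behavior label; checks run in a fixed priority order."""
    if m.stability < VOLATILE_MAX:
        return "Volatile"  # instability overrides every other signal
    if m.institutional_share >= AUTHORITY_MIN:
        return "Authority Mode"
    if m.consensus_share >= CONSENSUS_MIN:
        return "Consensus Mode"
    return "Unclassified"


print(classify(TopicMetrics(institutional_share=0.40, consensus_share=0.05, stability=0.30)))
```

Checking volatility first is a design choice: a label built on citations that change every run says little about the engine's stable behavior.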

The results were NOT what I expected. Not by a long shot.

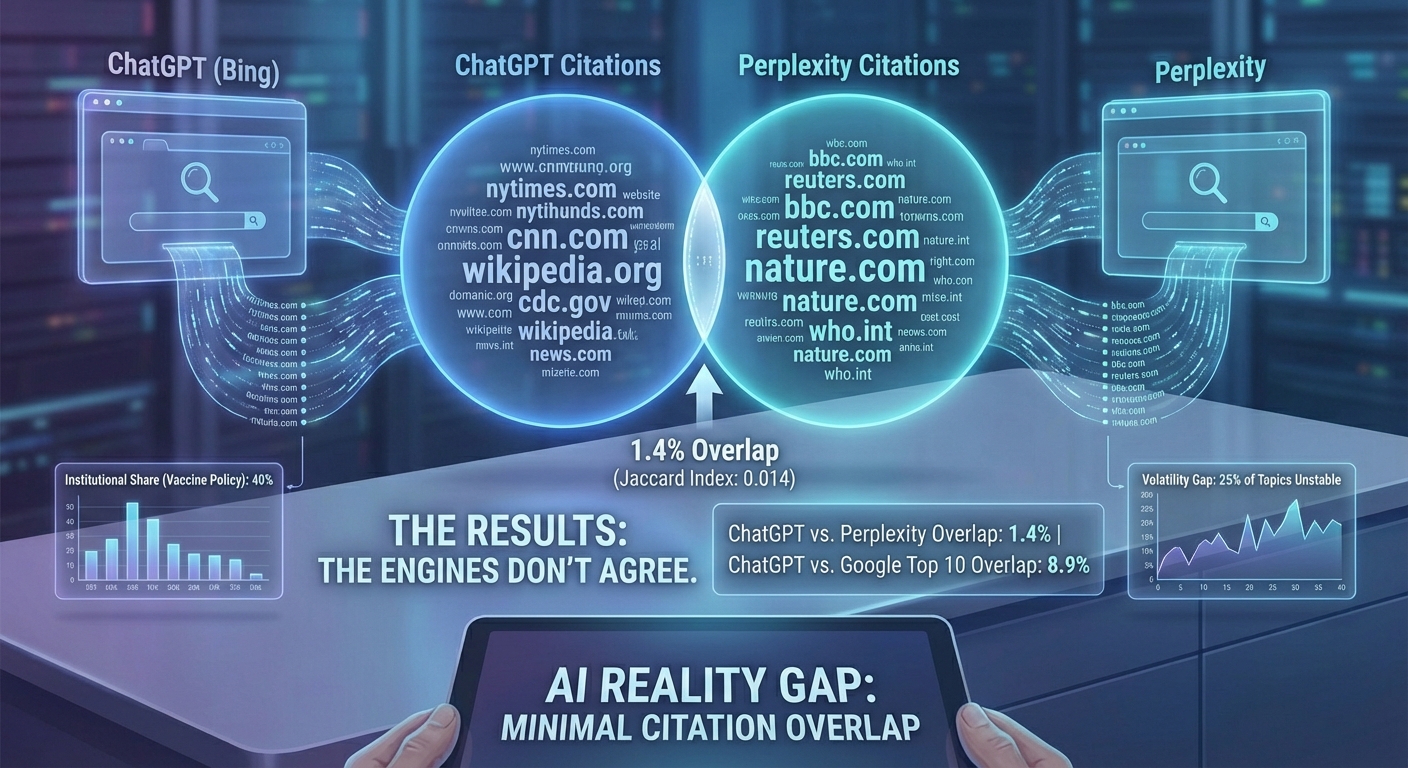

📊 THE RESULTS: THE ENGINES DON’T AGREE (ON ANYTHING)

If you think winning at Google SEO means you're winning at Perplexity, I have some bad news for you.

The highlight of the entire study was the Jaccard similarity index, a fancy way of saying "how much do these two sets overlap?" When I compared the domains cited by ChatGPT vs. Perplexity, the overlap score was a staggeringly low 0.014.

The Insight: ChatGPT and Perplexity almost never cite the same domains. They are looking at two different versions of reality.
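For reference, Jaccard similarity is the size of the intersection divided by the size of the union. A score of 0.014 means roughly one shared domain for every ~70 distinct domains the two engines cited between them. Here is a minimal Python sketch, assuming citations are compared at the domain level with a leading "www." stripped (a normalization choice made here, not necessarily the study's):

```python
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Lower-cased hostname with a leading 'www.' removed."""
    host = urlparse(url).hostname or ""  # .hostname is already lower-cased
    return host.removeprefix("www.")


def jaccard(a: set[str], b: set[str]) -> float:
    """|A ∩ B| / |A ∪ B|: 0.0 means disjoint, 1.0 means identical."""
    union = a | b
    if not union:
        return 0.0  # convention chosen here: two empty sets share nothing
    return len(a & b) / len(union)


chatgpt = {domain(u) for u in ["https://www.cdc.gov/x", "https://nytimes.com/y"]}
perplexity = {domain(u) for u in ["https://cdc.gov/z", "https://reddit.com/r/a", "https://who.int/b"]}
print(jaccard(chatgpt, perplexity))  # 1 shared / 4 total = 0.25
```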

| Metric | Findings |
|---|---|
| ChatGPT vs. Perplexity Overlap | 1.4% |
| ChatGPT vs. Google Top 10 Overlap | 8.9% |
| Avg Citation Stability | 19.5% |
| Highest Hedging Rate | 8.65 (Vaccine Policy) |

Here's what else the data revealed:

1. Google ≠ AI Citations

You can rank #1 on Google and still be invisible to AI. ChatGPT’s citations overlapped with Google’s top 10 results by only about 8.9%. If you’re optimizing only for the blue links, you’re missing the AI boat.

2. The "Authority Override"

On high-stakes topics like vaccine policy (t05), the AI goes into "Authority Mode." About 10% of the topics showed this behavior: models cited .gov, .edu, or .int domains even when there was zero consensus among other sources. For vaccine policy, the institutional share was nearly 40%.
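Here is a minimal sketch of an institutional-share calculation. It assumes the share is counted per citation and that "institutional" means the hostname ends in .gov, .edu, or .int. Country-level variants such as .gov.uk are not matched by this rule; the post does not say how those were handled.

```python
from urllib.parse import urlparse

INSTITUTIONAL_SUFFIXES = (".gov", ".edu", ".int")


def is_institutional(url: str) -> bool:
    host = urlparse(url).hostname or ""  # .hostname is already lower-cased
    return host.endswith(INSTITUTIONAL_SUFFIXES)


def institutional_share(urls: list[str]) -> float:
    """Fraction of citations (not unique domains) that point at institutional hosts."""
    if not urls:
        return 0.0
    return sum(is_institutional(u) for u in urls) / len(urls)


cited = [
    "https://www.cdc.gov/x",
    "https://who.int/y",
    "https://example.com/z",
    "https://reddit.com/r/a",
    "https://mit.edu/",
]
print(institutional_share(cited))  # 3 of 5 citations -> 0.6
```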

3. Consensus Scoring is Real

In about 20% of the topics, I saw "Weak Consensus Wins." This is where the AI picked a source not because it was the most "authoritative," but because it was the only one that appeared across multiple retrievers. Topic t01 (Immigration) had the highest consensus share at 28.7%.
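The post doesn't define "consensus share" precisely. One plausible reading is the fraction of a topic's citations whose domain was cited by at least two different retrievers. Here is a Python sketch under that assumption:

```python
from collections import Counter


def consensus_share(citations: dict[str, list[str]]) -> float:
    """citations maps retriever name -> cited domains for one topic (repeats allowed)."""
    # Number of distinct retrievers that cited each domain.
    reach = Counter(d for domains in citations.values() for d in set(domains))
    total = sum(len(domains) for domains in citations.values())
    if total == 0:
        return 0.0
    # Count every citation whose domain was also picked up by another retriever.
    shared = sum(1 for domains in citations.values() for d in domains if reach[d] >= 2)
    return shared / total


topic = {
    "chatgpt": ["a.com", "b.org"],
    "perplexity": ["b.org", "c.net", "c.net"],
    "google": ["d.io"],
}
print(consensus_share(topic))  # 2 of 6 citations are on b.org, cited by 2 retrievers
```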

4. The Volatility Gap

25% of the topics were completely unstable. I’d run the same search three times and get three totally different sets of citations. If your citations swing wildly, it means the AI hasn't found a "citable truth" yet.
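"Citation stability" (reported as a 19.5% average in the table above) isn't formally defined in the post. Here is a minimal sketch, assuming it means the mean pairwise Jaccard similarity between the cited-domain sets of repeated runs of the same query:

```python
from itertools import combinations
from statistics import mean


def jaccard(a: set[str], b: set[str]) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def citation_stability(runs: list[set[str]]) -> float:
    """Mean pairwise Jaccard similarity across repeated runs of one query."""
    if len(runs) < 2:
        raise ValueError("stability needs at least two runs")
    return mean(jaccard(a, b) for a, b in combinations(runs, 2))


three_runs = [{"a.com", "b.org"}, {"a.com", "c.net"}, {"d.io"}]
print(citation_stability(three_runs))  # pairs score 1/3, 0, 0 -> about 0.111
```

Under this definition, three runs that return identical citations score 1.0, and three fully disjoint runs score 0.0.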

📈 THE LARGER STUDY: 100,411 EVENTS CONFIRM THE PATTERN

The original 60-run experiment was small. We later ran a much bigger version: 100,411 AI citation events across 2,000 user queries on all four major platforms (ChatGPT, Claude, Perplexity, and Google AI Mode). The findings are published as The SEO Floor (DOI 10.5281/zenodo.19787654).

The "platforms don't agree" pattern got sharper at scale. On the 1,789 queries where two or more AI platforms cited at least one source, here is what we saw:

| Pattern across multi-platform queries | Share |
|---|---|
| All citing platforms pulled from the same Google rank tier | 38% |
| At least one platform cited top-30 AND another cited rank 31+ | 35% |
| Other tier spread | 27% |

62% of multi-platform queries (35% + 27%) have meaningful tier disagreement. Being cited by ChatGPT for a query tells you almost nothing about whether Perplexity will cite you for the same query, or even whether its citations will come from the same rank neighborhood.

The Jaccard overlap of 0.014 between ChatGPT and Perplexity in the original test is consistent with this. The platforms are not random; they are drawing on substantially different source pools.
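To make the three buckets concrete, here is a hypothetical Python classifier for a single query. It assumes each citing platform is summarized by its best (lowest) Google rank among its citations, and that ranks fall between 1 and 100. The study may have bucketed platforms with several citations differently.

```python
# Tier boundaries follow the rank bands used elsewhere in this post.
TIERS = [(1, 3, "top 3"), (4, 10, "rank 4-10"), (11, 30, "rank 11-30"), (31, 100, "rank 31-100")]


def tier(rank: int) -> str:
    for lo, hi, name in TIERS:
        if lo <= rank <= hi:
            return name
    raise ValueError(f"rank {rank} is outside 1-100")


def tier_pattern(best_rank: dict[str, int]) -> str:
    """best_rank maps each citing platform to its best Google rank for one query."""
    if len(best_rank) < 2:
        raise ValueError("pattern is only defined for two or more citing platforms")
    ranks = list(best_rank.values())
    if len({tier(r) for r in ranks}) == 1:
        return "same tier"
    if any(r <= 30 for r in ranks) and any(r > 30 for r in ranks):
        return "top-30 vs 31+ split"
    return "other tier spread"  # different tiers, all inside the top 30


print(tier_pattern({"chatgpt": 2, "perplexity": 45}))
```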

🧬 WHY THE OVERLAP IS SO LOW: ARCHITECTURE, NOT QUALITY

The original 60-run experiment treated the divergence as a mystery. The follow-up data points to a clean architectural explanation. Each platform pulls from a different source pool, and those pools overlap less than people assume.

Look at the share of UGC and social citations within each platform's deep-tier (rank 31+) citations:

| Platform | UGC share of deep-tier citations |

|---|---|

| Claude | 0.6% (effectively zero) |

| ChatGPT | 16.3% |

| Google AI Mode | 21.5% |

| Perplexity | 24.3% |

Claude effectively excludes Reddit, YouTube, and forum content from deep-tier retrieval. Perplexity leans on UGC for one in four deep citations. ChatGPT and Google AI Mode are intermediate. The platforms are not drawing from the same web.

This means consensus is not a single thing. There is a publisher-side consensus (which Claude, ChatGPT, and Google AI Mode all draw on heavily) and a community-side consensus (which Perplexity uses heavily, Google AI Mode and ChatGPT moderately, and Claude not at all). A piece of content can be the consensus answer in one pool and absent from the other.

The strategic implication: building "consensus" by getting your story repeated everywhere needs to span both publisher coverage AND community discussion (Reddit, YouTube reviews, forum AMAs). Content marketing alone gets you to publisher consensus. Community engagement gets you to UGC consensus. You need both to be the consensus answer across all four platforms.
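Here is a sketch of the per-platform calculation behind the UGC table. It assumes citation events arrive as (platform, URL, Google rank) tuples. The UGC domain list is a small hypothetical stand-in; the study's actual classification list isn't published in this post.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical UGC list for illustration only.
UGC_DOMAINS = {"reddit.com", "youtube.com", "quora.com", "stackexchange.com"}


def is_ugc(url: str) -> bool:
    host = urlparse(url).hostname or ""
    return any(host == d or host.endswith("." + d) for d in UGC_DOMAINS)


def deep_tier_ugc_share(events: list[tuple[str, str, int]]) -> dict[str, float]:
    """Per platform: share of its rank-31+ citations that point at UGC domains."""
    flags = defaultdict(list)
    for platform, url, rank in events:
        if rank >= 31:  # deep tier only
            flags[platform].append(is_ugc(url))
    return {p: sum(f) / len(f) for p, f in flags.items()}


events = [
    ("perplexity", "https://www.reddit.com/r/x", 40),
    ("perplexity", "https://example.com/guide", 50),
    ("perplexity", "https://youtube.com/watch?v=1", 2),  # top tier, ignored
    ("claude", "https://example.org/review", 60),
]
print(deep_tier_ugc_share(events))
```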

🎯 THE 77% ONE-HIT WONDER PROBLEM

Here is one finding that reframes "winning consensus" entirely. Most pages that get cited deep in the SERP (rank 31+) are cited exactly once. Across our 100,411 events:

| Tier of cited page | % cited only once | % cited by only 1 platform |
|---|---|---|
| Top 3 (Tier 1) | 33% | 45% |
| Rank 4-10 | 48% | 61% |
| Rank 11-30 | 59% | 74% |
| Rank 31-100 | 77% | 92% |

77% of deep-tier cited pages are one-hit wonders. No second citation, no second platform. The model retrieves them once and then never returns to them. This is the opposite of consensus. It is fuzzy long-tail retrieval into a sea of 43,000+ unique URLs.

Top-3 cited pages, by contrast, function as stable answer sources. They get cited multiple times and across multiple platforms. They are the "consensus" pool the original experiment was looking for.
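The one-hit-wonder table can be reproduced from raw events with a short tally. Here is a sketch that assumes (platform, URL, Google rank) events. It assigns each URL to a tier by its best rank across all its citations, which is an assumption made here because a page can rank differently for different queries.

```python
from collections import defaultdict


def tier(rank: int) -> str:
    if rank <= 3:
        return "top 3"
    if rank <= 10:
        return "rank 4-10"
    if rank <= 30:
        return "rank 11-30"
    return "rank 31-100"


def one_hit_stats(events: list[tuple[str, str, int]]) -> dict[str, tuple[float, float]]:
    """Return tier -> (share of pages cited only once, share cited by only one platform)."""
    citations: dict[str, int] = defaultdict(int)
    platforms: dict[str, set[str]] = defaultdict(set)
    best_rank: dict[str, int] = {}
    for platform, url, rank in events:
        citations[url] += 1
        platforms[url].add(platform)
        best_rank[url] = min(rank, best_rank.get(url, rank))
    by_tier: dict[str, list[str]] = defaultdict(list)
    for url, rank in best_rank.items():
        by_tier[tier(rank)].append(url)
    return {
        t: (
            sum(citations[u] == 1 for u in urls) / len(urls),
            sum(len(platforms[u]) == 1 for u in urls) / len(urls),
        )
        for t, urls in by_tier.items()
    }


sample = [
    ("chatgpt", "u1", 2), ("perplexity", "u1", 2),   # stable top-3 page
    ("chatgpt", "u2", 45),                           # one-hit wonder
    ("claude", "u3", 60), ("claude", "u3", 70),      # repeat, but one platform
]
print(one_hit_stats(sample))
```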

The honest version of "winning consensus": the only place consistent citations live is the top of Google. Below rank 30, every citation is a coin flip. The broader study's position ("indexation is the gate, ranking is the differentiator"), which aligns with Lily Ray's view, shows up sharply in the consensus data.

🧠 THE INSIGHT: TRUTH IS REPEATABILITY

So, why does any of this matter? Because it proves that "ranking" is the wrong framework for the AI age.

In traditional SEO, you're competing for a slot in a list. In AI SEO (or AEO/GEO), you're competing to be retrieved and trusted by a model that is trying to summarize a mess of information.

The AI doesn't want to show the user 10 links. It wants to give them one answer. To give that answer confidently, the model looks for consensus. If three different bots pull from different corners of the web and all find your specific data point, you win.

The Bottom Line: You’re not trying to be the "best" page. You’re trying to be the most repeated fact.

Backlinks used to be the "votes" of the internet. In the AI world, citations and mentions are the new currency. But it’s not just about getting more links; it’s about getting your specific claims, tables, and definitions repeated across different types of platforms.

The AI wants to hedge. It wants to say "Studies suggest..." or "According to [Source]..." If you provide a "citable object" (a specific, quotable sentence that is reinforced by other reputable sites), you become the "truth" the AI is looking for.

🛠️ THE PLAYBOOK: HOW TO WIN ACROSS ALL FOUR PLATFORMS

The old playbook of "write long content and get backlinks" still gets you to the SEO gate, which captures about 25% of citations (Study A). To reach the additional ~17% repeatable deep-tier cohort and to span the architectural divide between platforms, you need a multi-channel approach.

1️⃣ Earn Your SEO Gate

Top-3 Google pages are roughly 34x more likely to be cited than rank 31-100 pages for the same query (Study A). Rank is the dominant per-page lever. Traditional SEO is the foundation. There is no shortcut around the gate.

2️⃣ Schema Breadth, Not Just Schema Presence

Schema markup is the strongest single content-level lever (odds ratio 1.31 for the summed count of five schema types, controlling for rank). Deploy multiple relevant types per page (Article + FAQ + Organization at minimum, Product/Review where applicable). Skip Article schema on opinion content. A generic "has any schema" flag is not significant; breadth is what matters.
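As an illustration of schema breadth, here is a Python sketch that emits one JSON-LD block combining Article, FAQPage, and Organization in a single @graph. It includes an opt-out for Article on opinion content. The function name, fields, and example values are hypothetical; check any real markup against the schema.org definitions and your search engine's structured-data guidelines.

```python
import json


def build_jsonld(headline: str, org_name: str, org_url: str,
                 faqs: list[tuple[str, str]], is_opinion: bool = False) -> str:
    """Return a <script> tag holding one JSON-LD @graph with several schema types."""
    org = {"@type": "Organization", "@id": f"{org_url}#org",
           "name": org_name, "url": org_url}
    faq = {
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in faqs
        ],
    }
    graph = [faq, org]
    if not is_opinion:  # the post advises skipping Article schema on opinion content
        article = {"@type": "Article", "headline": headline,
                   "publisher": {"@id": org["@id"]}}
        graph.insert(0, article)
    payload = json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)
    # Escape "</" so answer text can never close the <script> element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


print(build_jsonld("What is answer-first formatting?", "Example Co", "https://example.com",
                   [("What is answer-first formatting?",
                     "Putting the direct answer at the top of the page.")]))
```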

3️⃣ Create "Citable Objects"

AI models love content that is easy to summarize. Use tables, clear definitions, bulleted lists, and short, punchy claims. Don't bury your main point in a 2,000-word ultimate guide.

4️⃣ Use the "Inverted Pyramid" Format

Put the answer at the top. The retriever pulls the top of the content first, so make sure it finds what it needs there. Answer-first coverage (query terms in the first 200 words) shows an odds ratio of 1.09 in our regression.
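Here is a minimal sketch of the answer-first coverage measure. It assumes the measure is the share of distinct query terms that appear in the first 200 words of the page body. The tokenizer is deliberately naive (lowercase alphanumeric runs, no stemming or stopword removal), so treat it as an approximation rather than the regression's exact feature.

```python
import re


def _tokens(text: str) -> list[str]:
    """Lowercase alphanumeric runs; a naive stand-in for real tokenization."""
    return re.findall(r"[a-z0-9]+", text.lower())


def answer_first_coverage(query: str, body: str, window: int = 200) -> float:
    """Share of distinct query terms found in the first `window` words of the body."""
    terms = set(_tokens(query))
    if not terms:
        return 0.0
    opening = set(_tokens(body)[:window])
    return len(terms & opening) / len(terms)


print(answer_first_coverage("best running shoes", "The best shoes for new runners are..."))
```

In this example, "runners" does not match "running" because there is no stemming, so coverage is 2 of 3 terms.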

5️⃣ Span the Architecture Divide

If your audience uses Claude, invest in publisher coverage and editorial-quality review sites (Reddit threads will not help: Claude's deep-tier UGC share is 0.6%). If they use Perplexity, Reddit AMAs, YouTube reviews, and forum presence pay off (Perplexity's deep-tier UGC share is 24.3%). For ChatGPT and Google AI Mode, run a mix.

6️⃣ Build Domain-Level Concentration

Niche specialization beats generalist breadth in the deep-tier cohort. High-repeat-cited domains average 15 to 50 substantive pages on a single topic vertical, with site-wide editorial-quality technical hygiene (97.7% meta description coverage, 96.8% canonical coverage). Your domain becomes the consensus answer for a tight topic, not a thin presence across many.

7️⃣ Test Your Own Volatility

Run your own brand name or key topic through ChatGPT, Claude, Perplexity, and Google AI Mode (5 times each). Track which platforms cite you, which UGC sources they pull, and which consensus pool you fall into. If you only show up on one platform, your distribution is too narrow.
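To keep this test honest, log every run and score it. Here is a hypothetical Python tracker that assumes you record the cited URLs from each run, either by hand or with your own tooling. For each platform, it reports the share of runs that cited your domain (subdomains included), and it flags the one-platform case described above.

```python
from urllib.parse import urlparse


def cites_domain(urls: list[str], my_domain: str) -> bool:
    """True if any cited URL is on my_domain or one of its subdomains."""
    for u in urls:
        host = urlparse(u).hostname or ""
        if host == my_domain or host.endswith("." + my_domain):
            return True
    return False


def hit_rates(results: dict[str, list[list[str]]], my_domain: str) -> dict[str, float]:
    """results maps platform -> one list of cited URLs per run."""
    return {
        platform: sum(cites_domain(run, my_domain) for run in runs) / len(runs)
        for platform, runs in results.items()
        if runs
    }


def single_platform_only(rates: dict[str, float]) -> bool:
    """The 'distribution too narrow' case: cited on exactly one platform."""
    return sum(1 for r in rates.values() if r > 0) == 1


results = {
    "chatgpt": [["https://www.example.com/a"], ["https://other.org/"],
                ["https://blog.example.com/post"], [], ["https://other.org/"]],
    "perplexity": [["https://reddit.com/r/x"]] * 5,
}
rates = hit_rates(results, "example.com")
print(rates, single_platform_only(rates))
```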

⚠️ WATCH OUT FOR: THE RANDOMNESS TRAP

Don't get discouraged if you do everything right and still don't show up.

Our data showed that 25% of topics are just unstable. Sometimes the AI fetches from a legacy index, sometimes it hits a live scraper, and sometimes it just hallucinates a source.

The kicker? You can't control the AI. You can only control the environment the AI retrieves from.

If you provide the most "citable" answer and distribute it widely enough to create a "consensus," you vastly improve your odds. But randomness is a feature, not a bug, of these systems.

🤔 ANYTHING I MISSED?

This experiment was a wake-up call for me. The idea that we can just "do SEO" for one engine and succeed is officially dead. We are entering the era of Multi-Engine Presence.

Dominance in ChatGPT doesn't guarantee you a spot in Perplexity. You have to optimize for the fact, not just the keyword.

I'm going to keep running these tests. The next one is going to focus on Multi-Language Consensus: does ChatGPT trust different things in Spanish than it does in English? I have a feeling the results will be even more divided.

CHEERS!