AI SEO EXPERIMENTS

ChatGPT and Bing: AI Citation Research Reveals the Real Source Number Is 27%, Not 87%

A widely cited study says 87% of ChatGPT's sources come from Bing search results. We ran our own test with 400 questions and found the real number is 27%. And two-thirds of what ChatGPT cites does not come from any search engine at all.

In February 2025, Seer Interactive published a study that sent shockwaves through the SEO industry. Their finding: 87% of ChatGPT's cited sources match Bing's top search results. The implication was huge. If true, it means the path to getting cited by ChatGPT is simple: rank well on Bing.

Most SEO teams do not even have Bing Webmaster Tools set up. So if this claim is true, it changes everything.

We decided to test it ourselves.

🔍 WHAT SEER FOUND (AND WHY IT WENT VIRAL)

Seer Interactive (authors: Christina Blake and Alisa Scharf) analyzed 100 queries and 500+ citations from ChatGPT. They matched those citations against both Google and Bing search results using their professional SERP tracking tool, SeerSignals. Their results:

| What they measured | Result |
|---|---|
| ChatGPT citations that match Bing's top 20 | 87% |
| ChatGPT citations that match Google's results | 56% |

The conclusion seemed obvious: Bing matters way more than Google for ChatGPT. This finding was repeated in conference talks, pitch decks, strategy documents, and hundreds of blog posts. It became one of the most-cited statistics in AI SEO.

But there was a detail hiding in the data that nobody talked about.

🧪 WHAT WE DID DIFFERENTLY

We ran a bigger version of the same test. But we added one critical thing that changes the entire analysis: fan-out query tracking.

Here is what we did:

- Ran 400 questions through ChatGPT (250 from a balanced research set covering 10 topics and 5 question types, plus 150 extra "best X" shopping questions)

- Captured the INTERNAL searches ChatGPT makes before answering. This is the key part most replication attempts miss. More on this in the next section.

- Searched Bing for both the original questions AND all the internal sub-questions ChatGPT generated (642 unique sub-queries total)

- Searched Google for the 250 original questions in the balanced set

- Matched ChatGPT's citations against each set of search results at the domain level

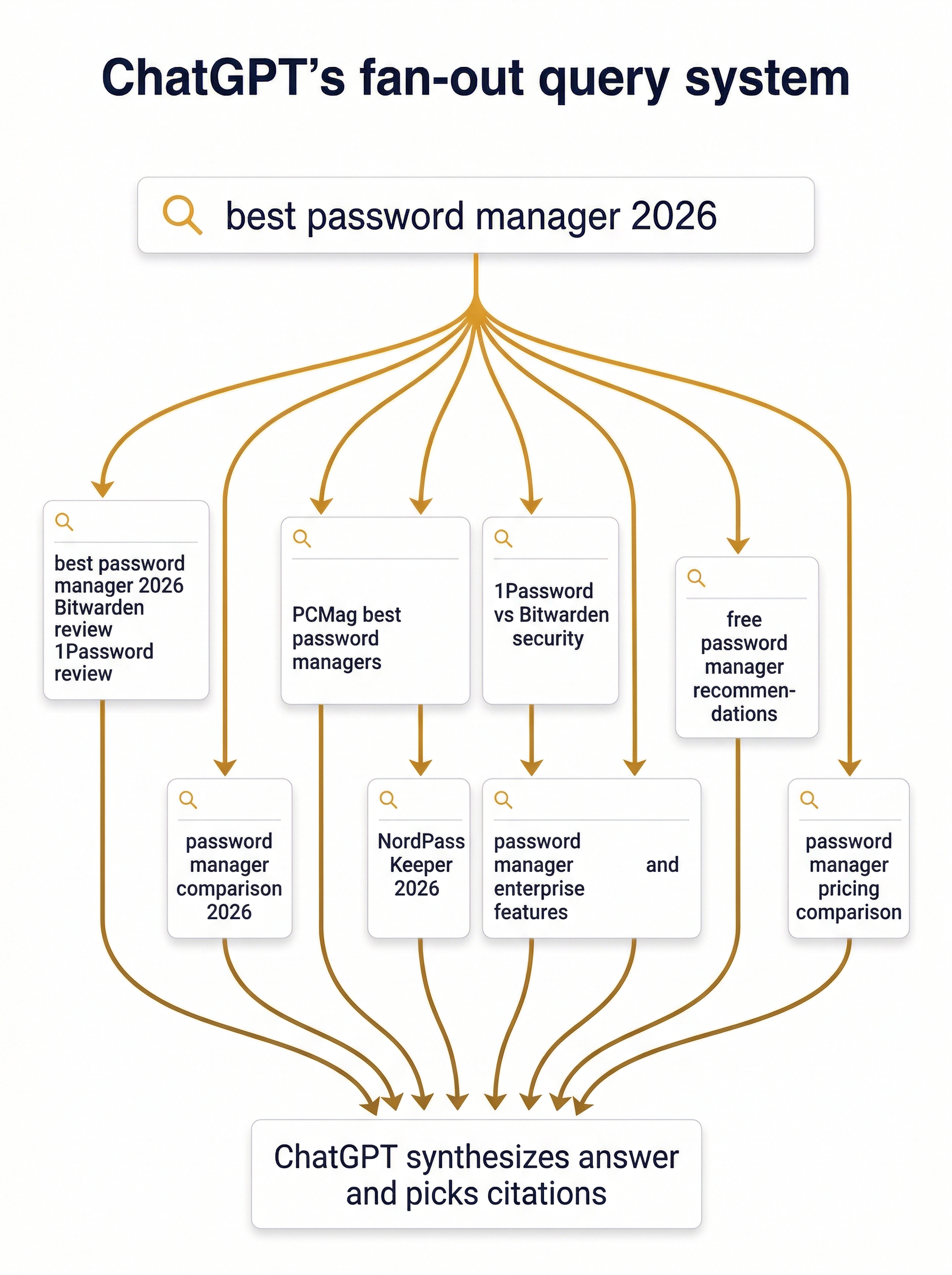

🌊 THE HIDDEN MECHANISM: FAN-OUT QUERIES

This is the most important concept in this entire post. If you understand this, you understand why the 87% number is misleading.

When you ask ChatGPT a question, it does not just search Bing once for your exact words.

Instead, ChatGPT breaks your question apart and searches Bing multiple times with different versions of your question. We call these "fan-out queries" because one question fans out into many searches.

Here is a real example from our data. We asked:

"best password manager 2026"

ChatGPT did not search Bing for "best password manager 2026." It generated 8 separate searches:

- "best password manager 2026 Bitwarden 1Password Dashlane LastPass review"

- "2026 password manager comparison Bitwarden 1Password Keeper NordPass"

- "PCMag best password managers 2026"

- "1Password vs Bitwarden security"

- "NordPass Keeper review 2026"

- "password manager enterprise features"

- "free password manager recommendations"

- "password manager pricing comparison"

Each of those 8 searches returns different Bing results. ChatGPT's final citation could come from ANY of those searches, not just the one you typed.

Why does this matter for the 87% claim?

If you only check whether ChatGPT's citations match Bing results for the original question ("best password manager 2026"), you miss most of the matches. The citation might match Bing results for sub-query #4 ("1Password vs Bitwarden security") but not for the original question. If you do not check sub-query #4, you think the citation did not come from Bing. But it did.

This is likely what explains the gap between studies. Seer used a professional tracking tool (SeerSignals) that may have captured these internal searches. Most replication attempts (including our early tests) only check the original question, which dramatically undercounts the Bing connection.
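To make the undercounting concrete, here is a minimal sketch of the two ways to compute a match rate: against the original question only, and against the original question plus its fan-out queries. The function names and the toy data are invented for illustration; this is not our actual pipeline code.

```python
from typing import Dict, List, Set


def pooled_domains(serps: Dict[str, List[str]], queries: List[str]) -> Set[str]:
    """Union of result domains across the given queries."""
    pooled: Set[str] = set()
    for query in queries:
        pooled.update(serps.get(query, []))
    return pooled


def match_rate(cited: List[str], serp_domains: Set[str]) -> float:
    """Share of cited domains that appear in the SERP domain set."""
    if not cited:
        return 0.0
    hits = sum(1 for domain in cited if domain in serp_domains)
    return hits / len(cited)


# Toy data, invented for illustration (not taken from the study).
serps = {
    "best password manager 2026": ["pcmag.com", "cnet.com"],
    "1Password vs Bitwarden security": ["bitwarden.com", "1password.com"],
}
original = "best password manager 2026"
fan_out = ["1Password vs Bitwarden security"]
cited = ["bitwarden.com", "pcmag.com", "wikipedia.org", "reddit.com"]

original_only = match_rate(cited, pooled_domains(serps, [original]))
with_fan_out = match_rate(cited, pooled_domains(serps, [original] + fan_out))
print(original_only, with_fan_out)
```

In this toy case the same four citations score twice as high once the sub-query results are pooled in, even though nothing about the citations changed.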

For a deeper dive into how fan-out queries work, see our full explainer on ChatGPT's fan-out system.

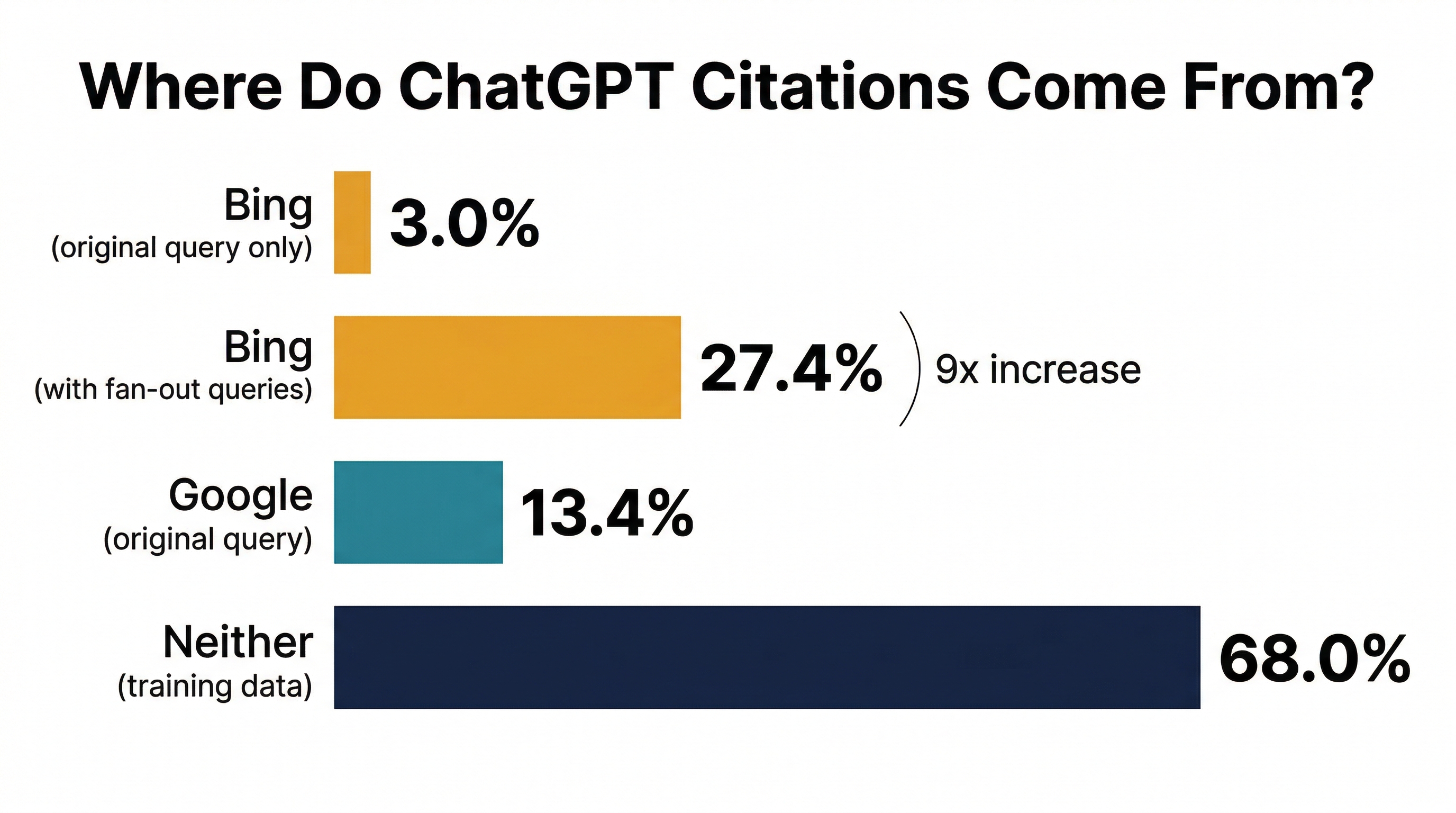

📊 THE RESULTS: 27%, NOT 87%

Here is what we found when we matched ChatGPT's citations against search engine results:

| What we matched against | Match rate |
|---|---|
| Bing results for the original question only | 3.0% |
| Bing results for original + all fan-out sub-queries | 27.4% |
| Google results for the original question | 13.4% |
| Neither Bing nor Google | 68.0% |

Let us break down what each number means.

3.0%: The number you get if you do not track fan-out

If you only check whether citations match Bing results for your exact question, the match rate is a tiny 3%. This is misleadingly low. It is like trying to catch fish but only looking in one small corner of the lake.

27.4%: The real Bing connection

When we included ALL the internal searches ChatGPT ran (not just the original question), the Bing match rate jumped to 27.4%. That is a 9x increase. Fan-out queries are the key variable.

This confirms Seer's directional finding: Bing does matter more than Google for ChatGPT citations. Bing (27.4%) outperforms Google (13.4%) by about 2x when you account for fan-out.

13.4%: Google still plays a role

Even though ChatGPT officially searches Bing (through the Microsoft/OpenAI partnership), 13.4% of its citations match Google's top 20 results. This makes sense because many high-quality pages rank well on BOTH search engines. If a page ranks #3 on both Google and Bing, it shows up in both match calculations.
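The overlap can be estimated from the headline numbers with simple inclusion-exclusion. This is a rough sketch that assumes all three percentages were computed over the same set of citations, which may not hold exactly because Google was only searched for part of the question set.

```python
# Headline percentages from the results table.
bing_with_fan_out = 27.4
google = 13.4
neither = 68.0

# Citations that match at least one engine.
either = 100.0 - neither

# Inclusion-exclusion: |Bing and Google| = |Bing| + |Google| - |Bing or Google|.
both = bing_with_fan_out + google - either

print(round(either, 1), round(both, 1))
```

Under that assumption, roughly 9 points of the citations match both engines, which is why the Bing and Google rates add up to more than the 32% that match either one.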

68.0%: The elephant in the room

This is the number nobody talks about. Two-thirds of what ChatGPT cites does not match any search engine's top 20 results for any query we tested. These citations come from ChatGPT's training data: URLs baked into the model's memory during training.

No amount of Bing optimization will influence these citations. They were determined months or years ago when the model was trained.

🤔 WHY OUR NUMBER (27%) IS DIFFERENT FROM SEER'S (87%)

We did not get the same number as Seer. Here is why the gap likely exists:

| Factor | Our study | Seer's study | Effect on match rate |
|---|---|---|---|
| Model used | GPT-5.4-nano (cost-efficient, fewer sub-queries) | Whatever was current in early 2025 | Our model may search less aggressively |
| Sub-queries per search | Average 4.7 | Unknown (likely more) | Fewer sub-queries = fewer Bing matches |
| Query types | 400 mixed (informational, shopping, comparison, review, validation) | 100 (likely skewed commercial) | Commercial queries trigger more web search |
| SERP tracking | Thordata scraper API | SeerSignals (professional tool) | Their Bing data may be more comprehensive |
| Web search trigger rate | 34% of queries triggered search | Not reported | 66% of our queries used training data only |
| Time period | April 2026 | Early 2025 | ChatGPT's search behavior may have changed |

The biggest factor is probably query type. Seer's 100 queries appear to have skewed toward commercial/shopping queries (for example, "best auto loan lenders"). These are exactly the queries where ChatGPT searches the web most aggressively. Our 400 queries included informational questions ("what is quantum computing") where ChatGPT often answers from memory without searching at all.

What Seer got right: The direction. Bing matters more than Google for ChatGPT. Our data confirms this.

What Seer's number overstates: The magnitude. 87% implies almost everything ChatGPT cites comes through Bing. Our data says it is closer to 27%, with the majority coming from training data.

🧠 THE TRAINING DATA PROBLEM: 68% OF CITATIONS COME FROM MEMORY

This is the most underreported finding in this entire space.

When ChatGPT answers a question, it does not always search the web. In our test, only 34% of queries (136 out of 400) triggered any web search at all. For the other 66%, ChatGPT answered entirely from memory, citing URLs it learned during training.

Even when ChatGPT DOES search the web, it still mixes in citations from training data. The result: 68% of all citations in our study came from neither Bing's top 20 nor Google's top 20.

What this means for you: If your website was in ChatGPT's training data (which was assembled months ago), you might get cited regardless of your Bing or Google rankings. If your website is new or was not in the training data, you need to get into Bing's index so ChatGPT can find you when it does search. But even then, you are competing for only about 27% of the citation slots.

✅ WHAT SITE OWNERS SHOULD ACTUALLY DO

Based on our data, here is the priority list, from most important to least:

Priority 1: Get indexed in Bing (table stakes, takes 10 minutes)

This costs nothing and ensures you are in the pool when ChatGPT searches. Steps:

- Set up Bing Webmaster Tools

- Submit your sitemap

- Enable IndexNow for faster indexing

This is necessary but not sufficient. Being in Bing's index does not guarantee citation. It is like buying a raffle ticket. You cannot win if you do not have a ticket, but having a ticket does not mean you win.
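For the IndexNow step, here is a minimal sketch of building a submission request with Python's standard library. The endpoint and the JSON field names follow the public IndexNow protocol; the host, key, and URLs are placeholders, and the sketch assumes you host your key file at the site root.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build (but do not send) an IndexNow POST request for a list of URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumption: the key file is hosted at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Placeholder values for illustration only.
req = build_indexnow_request(
    "www.example.com",
    "0123456789abcdef",
    ["https://www.example.com/new-page", "https://www.example.com/updated-page"],
)

# To actually submit, uncomment the next line:
# urllib.request.urlopen(req)
```

Many CMS plugins handle this for you, so a script like this is mainly useful if you publish from a custom build pipeline.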

Priority 2: Rank well on Google (the quality signal)

This sounds backwards, but our data shows Google ranking predicts ChatGPT citation better than Bing ranking:

| Ranking | ChatGPT citation rate |
|---|---|
| Google top 3 | 20.4% |
| Bing top 20 | 4.9% |

Why? Because the quality signals Google uses to rank pages (comprehensive content, good structure, authoritative sources) are the same signals ChatGPT uses to select which Bing results to actually cite. Google ranking is a proxy for content quality that ChatGPT values.

For the full breakdown of Google ranking vs AI citation, see our 10,293-page study.

Priority 3: Build topical authority (the biggest lever)

Our Experiment M research found that the single strongest predictor of AI citation is how many related searches your website ranks for:

| Questions your site ranks for | AI citation rate |
|---|---|
| 1 question | 34% |
| 4 to 7 questions | 87% |
| 16+ questions | 100% |

Build a cluster of 10 to 15 pages around your topic. Each page targets a different question people ask. Interlink them. This builds the topical authority signal that AI platforms trust.
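If you want to see where your site falls in that table, you can count how many of your topic's questions it ranks for. The sketch below assumes you already have the ranking domains for each question (from any rank tracker); the function name and the sample data are made up for illustration.

```python
from collections import Counter
from typing import Dict, List


def questions_ranked_for(serps: Dict[str, List[str]]) -> Counter:
    """Count, per domain, how many questions it appears in the results for."""
    counts: Counter = Counter()
    for domains in serps.values():
        # set() so a domain with two results for one question counts once.
        counts.update(set(domains))
    return counts


# Toy data, invented for illustration.
serps = {
    "best password manager": ["example.com", "pcmag.com"],
    "1Password vs Bitwarden": ["example.com", "bitwarden.com"],
    "free password manager": ["example.com", "pcmag.com", "pcmag.com"],
}
coverage = questions_ranked_for(serps)
print(coverage["example.com"], coverage["pcmag.com"], coverage["bitwarden.com"])
```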

Priority 4: Optimize your page content (the page-level levers)

Among pages at the same search rank, the ones with these features get cited more:

- Comparison structure ("X vs Y" tables, side-by-side breakdowns) was the #1 content signal

- The exact search words in the page, early in the content

- Deep subheadings (lots of H3 headers)

- Statistics and real data (pages cited by all 3 platforms had 7x more statistics)

- Objective tone (not "I think" blog style)

- FAQ sections with FAQ schema markup

- About 2,000 words
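For the FAQ schema item, here is a small sketch that turns question and answer pairs into schema.org FAQPage JSON-LD. The `faq_jsonld` helper is a hypothetical name; the `FAQPage`, `Question`, and `Answer` types are standard schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    ("Should I set up Bing Webmaster Tools?",
     "Yes. It takes 10 minutes and costs nothing."),
])

# Wrap the JSON in a script tag that can be pasted into the page's HTML.
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
print(script_tag)
```

Keep the questions and answers in the markup identical to the visible FAQ text on the page.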

For the full checklist, use our free AI Visibility Quick Check.

What NOT to spend time on

Based on our data, these commonly recommended optimizations showed no effect:

- "Optimizing for Bing specifically" (optimize for Google; the quality transfers)

- Page speed improvements for AI citation purposes (no effect in our data)

- Adding author bylines for AI trust (no effect)

- Adding more backlinks for AI citation (traditional authority metrics are not significant)

❓ FREQUENTLY ASKED QUESTIONS

Should I set up Bing Webmaster Tools?

Yes. It takes 10 minutes and costs nothing. Getting indexed in Bing puts you in ChatGPT's candidate pool when it searches. But do not expect this alone to get you cited. Being in Bing's index is like having a ticket to the lottery. It gets you in, but 68% of the prizes go to training data anyway.

If ChatGPT uses Bing, why does Google ranking predict citations better?

Because Google's ranking algorithm rewards the same content qualities that ChatGPT values: comprehensive coverage, authoritative information, good structure. When ChatGPT gets Bing results, it still evaluates the pages based on content quality. Pages that rank well on Google tend to have the content features ChatGPT prefers, even though ChatGPT found them through Bing.

What are fan-out queries and why do they matter?

Fan-out queries are the internal sub-searches ChatGPT generates before answering your question. Instead of searching Bing once, it breaks your question into multiple reformulated searches and runs them all. This means your page can be found through a search you never targeted directly. For the full explanation, see our fan-out queries explainer.

Can I see what fan-out queries ChatGPT generates for my topic?

Not directly in the ChatGPT interface. We captured them using the OpenAI Responses API, which exposes the internal web_search tool calls. For practitioners, the closest proxy is to think about all the related questions someone might ask about your topic, and make sure your content covers them.
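If you do have API access, pulling the sub-queries out of a response looks roughly like the sketch below. It works on a response that has already been converted to a plain dictionary, and it assumes search calls appear as `web_search_call` items with an `action` that carries the query text. Treat that shape as an assumption and check it against the current API reference before relying on it.

```python
def extract_fan_out_queries(response: dict) -> list:
    """Collect unique search queries from web search tool calls, in order."""
    queries = []
    for item in response.get("output", []):
        if item.get("type") != "web_search_call":
            continue
        action = item.get("action") or {}
        query = action.get("query")
        # Assumed shape: search actions carry the query text under "query".
        if action.get("type") == "search" and query and query not in queries:
            queries.append(query)
    return queries


# Hand-written sample in the assumed shape (not a real API response).
sample = {
    "output": [
        {"type": "web_search_call",
         "action": {"type": "search", "query": "PCMag best password managers 2026"}},
        {"type": "web_search_call",
         "action": {"type": "search", "query": "1Password vs Bitwarden security"}},
        {"type": "web_search_call",
         "action": {"type": "search", "query": "1Password vs Bitwarden security"}},
        {"type": "message", "content": []},
    ]
}
fan_out = extract_fan_out_queries(sample)
print(fan_out)
```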

Is the 87% number wrong?

It is not wrong in the way Seer measured it, but it is misleading in how it has been repeated. Seer likely captured more of the Bing connection because their tracking tool may have matched against a broader set of Bing results. But the way most people interpret "87% comes from Bing" (as in: just rank on Bing and you will get cited 87% of the time) is not what the data supports. Our data shows 27% with fan-out tracking, and 68% comes from training data, not any search engine.

🔬 METHODOLOGY NOTES

For researchers who want the technical details:

- 400 queries: 250 balanced (5 intents x 10 verticals) + 150 commercial boost (discovery + review-seeking)

- ChatGPT model: gpt-5.4-nano via OpenAI Responses API with web_search tool

- 136 of 400 queries triggered web search (34%), generating 642 unique sub-queries (avg 4.7 per search)

- Bing results: Thordata scraper API, top 20 per query, 98% success rate

- Google results: Google CSE API, top 20, 100% coverage for 250 queries

- Matching: Domain-level with www-stripping and lowercase normalization, per-query

- Seer's study: 100 queries, 500+ citations, SeerSignals SERP tracker, published February 2025
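The domain-level matching rule can be sketched in a few lines. This is an illustration of the normalization described above (lowercase, strip a leading `www.`), not our production code; the function names are hypothetical.

```python
from urllib.parse import urlparse


def normalize_domain(url: str) -> str:
    """Lowercase the hostname and strip a leading 'www.'."""
    # urlparse only fills in the hostname when a scheme or '//' is present.
    parsed = urlparse(url if "://" in url else "//" + url)
    host = (parsed.hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def citation_matches(cited_url: str, serp_urls: list) -> bool:
    """True if the cited URL's domain appears among one query's results."""
    serp_domains = {normalize_domain(u) for u in serp_urls}
    return normalize_domain(cited_url) in serp_domains


serp = ["https://www.pcmag.com/picks/best-password-managers", "https://bitwarden.com/"]
print(citation_matches("https://PCMag.com/reviews/1password", serp))
print(citation_matches("https://en.wikipedia.org/wiki/Password_manager", serp))
```

Note that this rule keeps subdomains distinct, so `en.wikipedia.org` and `wikipedia.org` would not match each other. Domain-level matching is also more generous than URL-level matching, because any page on a ranking domain counts as a match.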

📚 REFERENCES

- Blake, C. & Scharf, A. (2025). "87% of SearchGPT Citations Match Bing's Top Results." Seer Interactive.

- Lee, A. (2026a). "Query Intent and Google Rank as Joint Predictors of AI Citation: A Multi-Platform Observational Study." Preprint v6.

- Lee, A. (2026c). "I Rank on Page 1: What Gets Me Cited by AI?" Preprint.

- Aggarwal, P., Murahari, V., Rajpurohit, T., Kalyan, A., Narasimhan, K., & Deshpande, A. (2024). "GEO: Generative Engine Optimization." KDD 2024.